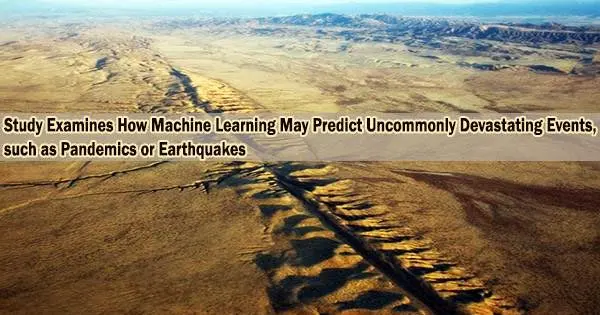

Computational modeling faces an almost insurmountable challenge when it comes to predicting rare catastrophic events (think earthquakes, pandemics, or “rogue waves” that could demolish coastal structures): these events are statistically so uncommon that there is simply not enough data for predictive models to determine when they will occur again.

It need not be that way, according to a group of researchers from Brown University and the Massachusetts Institute of Technology.

In a recent study published in Nature Computational Science, the researchers describe how they trained a powerful machine learning technique to predict scenarios, probabilities, and sometimes even the timeline of rare events despite the lack of historical records for such events. They combined this technique with statistical algorithms, which require less data to make accurate, efficient predictions.

As a result, the research team found that the new framework offers a way around the enormous amounts of data typically required for these kinds of computations, effectively reducing the challenge of predicting rare events to a question of quality over quantity.

“You have to realize that these are stochastic events,” said George Karniadakis, a professor of applied mathematics and engineering at Brown and a study author. “An outburst of pandemic like COVID-19, environmental disaster in the Gulf of Mexico, an earthquake, huge wildfires in California, a 30-meter wave that capsizes a ship: these are rare events, and because they are rare, we don’t have a lot of historical data. We don’t have enough samples from the past to predict them further into the future. The question that we tackle in the paper is: What is the best possible data that we can use to minimize the number of data points we need?”

The researchers found the solution in a sequential sampling technique called active learning. These statistical algorithms not only analyze the data fed to them but, more importantly, learn from that data to label new relevant data points that are equally or even more important to the outcome being calculated. At the most basic level, they allow more to be done with less.
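To make the idea of sequential sampling concrete, here is a minimal sketch of an active learning loop in plain Python. It is an illustration only, not the method used in the study: the `expensive_simulation` function, the nearest-neighbor surrogate, and the `acquisition` score are all hypothetical stand-ins chosen for brevity. Each round, the loop picks the one candidate that looks both poorly explored and likely to be extreme, then pays for a single new evaluation there.

```python
import math

def expensive_simulation(x):
    """Hypothetical stand-in for a costly experiment: nearly flat, with one rare spike near x = 0.8."""
    return math.exp(-((x - 0.8) ** 2) / 0.002)

def nearest(x, samples):
    """Return the already-evaluated (x, y) sample closest to x."""
    return min(samples, key=lambda s: abs(s[0] - x))

def acquisition(x, samples):
    """Score a candidate (assumed form): distance to known data, weighted up where nearby outputs are large."""
    xn, yn = nearest(x, samples)
    uncertainty = abs(x - xn)      # crude proxy: far from data means less certain
    extremeness = 1.0 + abs(yn)    # favor regions whose neighbors look extreme
    return uncertainty * extremeness

def active_learning(candidates, initial_xs, n_rounds):
    """Sequentially choose which candidates to evaluate, one per round."""
    samples = [(x, expensive_simulation(x)) for x in initial_xs]
    for _ in range(n_rounds):
        seen = {s[0] for s in samples}
        pool = [x for x in candidates if x not in seen]
        x_next = max(pool, key=lambda x: acquisition(x, samples))
        samples.append((x_next, expensive_simulation(x_next)))
    return samples

candidates = [i / 100 for i in range(101)]
samples = active_learning(candidates, initial_xs=[0.0, 1.0], n_rounds=10)
```

The point of the sketch is the budget: 12 evaluations in total instead of all 101 candidates, with each new point chosen using what the earlier ones revealed.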

That’s critical to the machine learning model the researchers used in the study. Called DeepONet, it is a type of artificial neural network, which uses interconnected nodes in successive layers to loosely mimic the connections made by neurons in the human brain.

DeepONet is a well-known deep neural operator. It is more sophisticated and powerful than a conventional artificial neural network because it is actually two neural networks in one, processing data in two parallel networks.
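The two-networks-in-one structure can be sketched in a few lines. In the usual DeepONet formulation, one network (the "branch") encodes an input function sampled at fixed sensor points, the other (the "trunk") encodes the location where the output is wanted, and the prediction is the dot product of the two encodings. The toy below is an untrained, pure-Python illustration of that forward pass; the class name `TinyDeepONet`, the layer sizes, and the random weights are assumptions made for the example, not details from the study.

```python
import math
import random

def make_layer(n_in, n_out, rng):
    """Random weights and zero biases for one dense layer."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def dense(layer, inputs, activate=True):
    """Apply one dense layer, optionally followed by a tanh activation."""
    weights, biases = layer
    out = [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, biases)]
    return [math.tanh(v) for v in out] if activate else out

class TinyDeepONet:
    """Hypothetical toy: two small networks whose outputs are combined by a dot product."""

    def __init__(self, n_sensors, n_coords, width=8, p=4, seed=0):
        rng = random.Random(seed)
        # Branch net: encodes the input function from its values at the sensors.
        self.branch = [make_layer(n_sensors, width, rng), make_layer(width, p, rng)]
        # Trunk net: encodes the coordinate where the output is evaluated.
        self.trunk = [make_layer(n_coords, width, rng), make_layer(width, p, rng)]

    def forward(self, u_samples, y):
        b = dense(self.branch[1], dense(self.branch[0], u_samples), activate=False)
        t = dense(self.trunk[1], dense(self.trunk[0], y))
        return sum(bk * tk for bk, tk in zip(b, t))

model = TinyDeepONet(n_sensors=5, n_coords=1)
u = [math.sin(2 * math.pi * i / 4) for i in range(5)]  # input function at 5 sensors
prediction = model.forward(u, [0.3])                   # output value at location 0.3
```

Because the weights are random, `prediction` carries no meaning here; training would adjust both networks together so that the dot product matches observed outputs.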

As a result, once it learns what it is looking for, it can analyze enormous sets of data and scenarios at breakneck speed and return similarly enormous sets of possibilities.

The bottleneck with this powerful tool, especially when it comes to rare events, is that deep neural operators need large amounts of data to be trained to make calculations that are accurate and efficient.

In the paper, the study team shows that even without a large number of data points, the DeepONet model can be trained to recognize the factors or precursors that lead up to the disastrous event being analyzed.

“The thrust is not to take every possible data and put it into the system, but to proactively look for events that will signify the rare events,” Karniadakis said. “We may not have many examples of the real event, but we may have those precursors. Through mathematics, we identify them, which together with real events will help us to train this data-hungry operator.”

The researchers apply the approach to identifying parameters and different ranges of probabilities for dangerous surges during a pandemic, to finding and forecasting rogue waves, and to estimating when a ship will split in half as a result of stress.

For instance, the researchers discovered they could find and quantify when rogue waves will form by looking at probable wave conditions that nonlinearly interact over time, resulting in waves that are occasionally three times their original size. Rogue waves are defined as those that are larger than twice the size of surrounding waves.
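The "twice the size of surrounding waves" rule is easy to express as a check over a record of wave heights. The sketch below is illustrative only: it assumes that "surrounding waves" is summarized by the significant wave height, taken here as the mean of the highest third of waves in the record, and the sample heights are made up for the example.

```python
def significant_wave_height(heights):
    """Mean of the highest third of waves in the record (assumed summary of 'surrounding waves')."""
    ordered = sorted(heights, reverse=True)
    top = ordered[: max(1, len(ordered) // 3)]
    return sum(top) / len(top)

def find_rogue_waves(heights, factor=2.0):
    """Return the waves taller than `factor` times the significant wave height."""
    hs = significant_wave_height(heights)
    return [h for h in heights if h > factor * hs]

# Made-up wave heights in meters: a calm sea with one outlier.
record = [1.0, 1.2, 0.9, 1.1, 1.3, 1.0, 0.8, 1.2, 6.0]
rogues = find_rogue_waves(record)  # only the 6.0 m wave exceeds the threshold
```

Note that the outlier itself raises the significant wave height, so this version of the check is conservative; a detector could instead compute the baseline from the waves that came before.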

The researchers discovered that their novel approach worked better than more conventional modeling attempts, and they believe it provides a framework that can quickly find and predict many types of unusual events.

In the paper, the research team provides recommendations for designing future experiments in ways that reduce costs and improve forecasting accuracy. Karniadakis, for example, is already collaborating with environmental scientists to apply the new technique to forecasting climate phenomena such as hurricanes.

Ethan Pickering and Themistoklis Sapsis of MIT led the study. Karniadakis and other Brown researchers introduced DeepONet in 2019. They are currently seeking a patent for the technology. The study was supported with funding from the Defense Advanced Research Projects Agency, the Air Force Research Laboratory, and the Office of Naval Research.