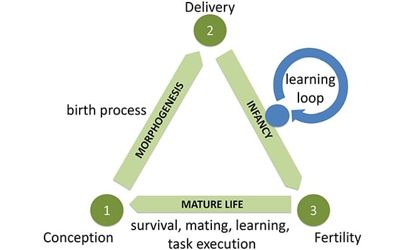

Evolutionary robotics (ER) is an experimental research field and a methodology that uses evolutionary computation to develop controllers and/or hardware for autonomous robots, so that robots are created automatically rather than designed by hand. It can be considered a subfield of robotics that aims to create more robust and adaptive robots. Algorithms in ER frequently operate on populations of candidate controllers, initially selected from some distribution.

In practice, ER automatically generates the artificial brains and morphologies of autonomous robots. This approach is useful both for investigating the design space of robotic applications and for testing scientific hypotheses about biological mechanisms and processes.

In ER, the population of candidate controllers is repeatedly modified according to a fitness function. Experimentation amounts to executing an evolutionary process in a population of (simulated) robots in a given environment, with some targeted robot behavior. The targeted features are used to specify a fitness measure that drives the evolutionary process. In the case of genetic algorithms (or “GAs”), a common method in evolutionary computation, the population is repeatedly grown through crossover, mutation, and other GA operators and then culled according to the fitness function.
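The grow-then-cull loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure of any particular ER system: the genome is a plain list of numbers, the `fitness` function is a toy stand-in for a robot evaluation in simulation, and all names and constants (`GENOME_LENGTH`, `MUTATION_STD`, `evolve`, and so on) are hypothetical.

```python
import random

# Hypothetical settings chosen only for illustration.
GENOME_LENGTH = 8       # number of controller parameters per candidate
POPULATION_SIZE = 20
GENERATIONS = 40
MUTATION_STD = 0.1      # standard deviation of Gaussian mutation noise


def fitness(genome):
    """Toy stand-in for evaluating a robot in simulation.

    Higher is better; the maximum (0.0) is reached when every gene is 0.5.
    A real ER experiment would run the robot and score its behavior here.
    """
    target = 0.5
    return -sum((g - target) ** 2 for g in genome)


def crossover(parent_a, parent_b, rng):
    """One-point crossover: head of one parent, tail of the other."""
    point = rng.randrange(1, len(parent_a))
    return parent_a[:point] + parent_b[point:]


def mutate(genome, rng):
    """Add small Gaussian noise to every gene."""
    return [g + rng.gauss(0.0, MUTATION_STD) for g in genome]


def evolve(seed=0):
    rng = random.Random(seed)
    # Initial population drawn from a uniform distribution.
    population = [
        [rng.uniform(-1.0, 1.0) for _ in range(GENOME_LENGTH)]
        for _ in range(POPULATION_SIZE)
    ]
    best_history = []
    for _ in range(GENERATIONS):
        # Grow: create offspring with crossover followed by mutation.
        offspring = []
        for _ in range(POPULATION_SIZE):
            parent_a, parent_b = rng.sample(population, 2)
            offspring.append(mutate(crossover(parent_a, parent_b, rng), rng))
        # Cull: keep only the fittest candidates among parents and offspring.
        pool = population + offspring
        population = sorted(pool, key=fitness, reverse=True)[:POPULATION_SIZE]
        best_history.append(fitness(population[0]))
    return population[0], best_history
```

Calling `evolve()` returns the best genome found and the best fitness recorded in each generation. Because parents compete with their offspring during culling, the best fitness never decreases from one generation to the next in this sketch; other selection schemes do not give that guarantee.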

The candidate controllers used in ER applications are often drawn from some subset of the set of artificial neural networks, although some applications (including SAMUEL, developed at the Naval Center for Applied Research in Artificial Intelligence) use collections of “IF THEN ELSE” rules as the constituent parts of an individual controller. Drawing heavily on biology and ethology, ER uses the tools of neural networks, genetic algorithms, dynamical systems, and biomorphic engineering.
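To make the two controller representations concrete, the sketch below shows a tiny feedforward neural network whose weights are read from a flat genome, next to a hand-written set of IF-THEN-ELSE rules. Both are illustrative assumptions: the sensor layout (left, front, right proximity readings), the network sizes, and the function names are hypothetical, and the rule set is not SAMUEL's actual rule format.

```python
import math

# Hypothetical robot interface: 3 proximity sensors in, 2 wheel speeds out.
N_SENSORS = 3
N_HIDDEN = 2
N_MOTORS = 2
# Each neuron has one weight per input plus one bias.
GENOME_SIZE = N_HIDDEN * (N_SENSORS + 1) + N_MOTORS * (N_HIDDEN + 1)


def layer(inputs, weights, n_out):
    """Fully connected tanh layer; each neuron's bias is stored last."""
    n_in = len(inputs)
    outputs = []
    for j in range(n_out):
        row = weights[j * (n_in + 1):(j + 1) * (n_in + 1)]
        # zip stops after n_in items, so the trailing bias is added separately.
        total = row[-1] + sum(w * x for w, x in zip(row, inputs))
        outputs.append(math.tanh(total))
    return outputs


def neural_controller(genome, sensors):
    """Decode a flat genome into network weights and compute motor commands."""
    if len(genome) != GENOME_SIZE:
        raise ValueError(f"expected {GENOME_SIZE} genes, got {len(genome)}")
    split = N_HIDDEN * (N_SENSORS + 1)
    hidden = layer(sensors, genome[:split], N_HIDDEN)
    return layer(hidden, genome[split:], N_MOTORS)


def rule_controller(sensors):
    """Hand-written IF-THEN-ELSE rules for simple obstacle avoidance."""
    left, front, right = sensors
    if front > 0.5:
        # Obstacle ahead: turn away from the side with the closer obstacle.
        if left > right:
            return [1.0, -1.0]   # spin right
        else:
            return [-1.0, 1.0]   # spin left
    return [1.0, 1.0]            # path clear: drive straight
```

In the neural case an evolutionary algorithm would search over the `GENOME_SIZE` numbers in the genome; in a rule-based system the conditions and actions of the rules would be the evolved material instead.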

It is theoretically possible to use any set of symbolic formulations of a control law (sometimes called a policy in the machine learning community) as the space of possible candidate controllers. Beyond controller design, evolutionary robotics models have been used to run series of experiments on the role of various social and evolutionary variables in the emergence of shared communication.

Evolutionary robotics applies the selection, variation, and heredity principles of natural evolution to the design of robots with embodied intelligence. Artificial neural networks can also be used for robot learning outside the context of evolutionary robotics; in particular, other forms of reinforcement learning can be used for learning robot controllers. One of the main challenges in the field is transferring robots and controllers evolved in simulation to physical hardware.
