A new AI system can read your thoughts. By analyzing brain signals, it enables a computer to generate images of what you’re thinking, and it can also interpret and model those thoughts.

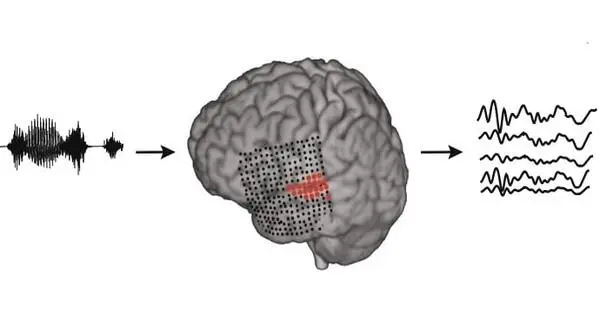

Neuroengineers have developed a system that converts thought into understandable, recognizable speech. The technology can reconstruct the words a person hears with unprecedented clarity by monitoring their brain activity. This breakthrough, which uses speech synthesizers and artificial intelligence, could pave the way for computers to communicate directly with the brain.

With surprising but still limited accuracy, artificial intelligence can decode words and sentences from brain activity. The AI guesses what a person has heard based on a few seconds of brain activity data. According to preliminary research, it lists the correct answer in its top 10 possibilities up to 73 percent of the time.

According to Giovanni Di Liberto, a computer scientist at Trinity College Dublin who was not involved in the research, the AI’s “performance was above what many people thought was possible at this stage.”

Developed at Meta, the parent company of Facebook, the AI could eventually be used to help thousands of people around the world who are unable to communicate through speech, typing or gestures, researchers report August 25 at arXiv.org. That includes many patients in minimally conscious, locked-in or “vegetative” states, the last of which is now generally known as unresponsive wakefulness syndrome (SN: 2/8/19).

Most existing technologies to help such patients communicate require risky brain surgeries to implant electrodes. This new approach “could provide a viable path to help patients with communication deficits … without the use of invasive methods,” says neuroscientist Jean-Rémi King, a Meta AI researcher currently at the École Normale Supérieure in Paris.

King and his colleagues trained a computational tool to detect words and sentences on 56,000 hours of speech recordings from 53 languages. The tool, also known as a language model, learned how to recognize specific features of language both at a fine-grained level — think letters or syllables — and at a broader level, such as a word or sentence.

The team applied an AI with this language model to databases from four institutions that included brain activity from 169 volunteers. In these databases, participants listened to various stories and sentences from, for example, Ernest Hemingway’s The Old Man and the Sea and Lewis Carroll’s Alice’s Adventures in Wonderland while their brains were scanned using either magnetoencephalography or electroencephalography. Those techniques measure the magnetic or electrical component of brain signals.

Then with the help of a computational method that helps account for physical differences among actual brains, the team tried to decode what participants had heard using just three seconds of brain activity data from each person. The team instructed the AI to align the speech sounds from the story recordings to patterns of brain activity that the AI computed as corresponding to what people were hearing. It then made predictions about what the person might have been hearing during that short time, given more than 1,000 possibilities.
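To make the idea of guessing from a list concrete, here is a minimal Python sketch of how a ranked list of guesses could be produced. It is not the researchers' code. It assumes that each brain recording and each candidate speech segment has already been turned into a vector of numbers, and it uses cosine similarity as a stand-in scoring rule. The function names and the toy vectors are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length, nonzero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank_candidates(brain_vec, candidates):
    """Return candidate indices ordered from best match to worst."""
    scores = [cosine(brain_vec, c) for c in candidates]
    return sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)


def in_top_k(brain_vec, candidates, true_index, k=10):
    """True if the segment that was actually heard is among the k best guesses."""
    return true_index in rank_candidates(brain_vec, candidates)[:k]


# Toy data (made up): three candidate speech segments and one brain-derived vector.
candidates = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.7, 0.7, 0.1]]
brain_vec = [0.9, 0.2, 0.0]
ranking = rank_candidates(brain_vec, candidates)
```

In the study the list held more than 1,000 candidates and the top 10 guesses were checked. The toy example uses three candidates only to keep the arithmetic easy to follow.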

Using magnetoencephalography, or MEG, the correct answer was in the AI’s top 10 guesses up to 73 percent of the time, the researchers found. With electroencephalography, that value dropped to no more than 30 percent. “[That MEG] performance is very good,” Di Liberto says, but he’s less optimistic about its practical use. “What can we do with it? Nothing. Absolutely nothing.”

The reason, he says, is that MEG requires a bulky and expensive machine. Bringing this technology to clinics will require scientific innovations that make the machines cheaper and easier to use.

It’s also important to understand what “decoding” really means in this study, says Jonathan Brennan, a linguist at the University of Michigan in Ann Arbor. The word is often used to describe the process of deciphering information directly from a source — in this case, speech from brain activity. But the AI could do this only because it was provided a finite list of possible correct answers to make its guesses.
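A quick calculation puts the finite-list caveat in numbers. The sketch below assumes exactly 1,000 equally likely candidates, a simplification, since the study reports only that there were more than 1,000. It computes how often a purely random guesser would have the right answer in its top 10. The function name is hypothetical.

```python
def chance_top_k(k, n_candidates):
    """Chance that a uniformly random ranking places the true answer in the top k."""
    if not 0 < k <= n_candidates:
        raise ValueError("k must be between 1 and n_candidates")
    return k / n_candidates


# Assumed list size of 1,000; the study says "more than 1,000".
baseline = chance_top_k(10, 1000)
```

Under that assumption, random guessing would succeed about 1 percent of the time. The reported accuracies are far above that figure, but they still describe picking from a supplied list rather than open-ended decoding.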

“With language, that’s not going to cut it if we want to scale to practical use,” Brennan says.

Furthermore, according to Di Liberto, the AI decoded information from participants who were passively listening to audio, which is not directly relevant to nonverbal patients. To make it a useful communication tool, scientists will need to learn how to decipher what these patients intend to say from brain activity, such as expressions of hunger, discomfort, or a simple “yes” or “no.” King agrees that the new study is about “decoding of speech perception, not production.” Though speech production is the ultimate goal, “we’re quite a long way away” for the time being.