AMOLF’s Soft Robotic Matter group has demonstrated that a group of small autonomous, self-learning robots can easily adapt to changing circumstances. They linked these simple robots in a line, and then each individual robot taught itself to move as quickly as possible. The findings were published in the scientific journal PNAS today.

Robots are ingenious machines that can accomplish a great deal. There are robots that can dance or walk up and down stairs, for example, and swarms of drones that fly in formation on their own. All of these robots, however, are programmed to some extent: different situations or patterns have been pre-programmed into their brains, they are centrally controlled, or a complex computer network teaches them behavior through machine learning. Bas Overvelde, Principal Investigator of AMOLF’s Soft Robotic Matter group, wanted to go back to basics and create a self-learning robot that is as simple as possible. “Ultimately, we want to be able to use self-learning systems built from simple building blocks, such as a polymer. These are also known as robotic materials.”

The researchers succeeded in getting very simple, interlinked robotic carts that move on a track to learn how to move as fast as possible in a specific direction. The carts did this even though they had not been programmed with a route and did not know what the other carts were doing. “This is a new way of thinking in the design of self-learning robots. In contrast to most traditional, programmed robots, this type of simple self-learning robot does not require any complex models to adapt to a rapidly changing environment,” Overvelde explains. “In the future, this could find applications in soft robotics, such as robotic hands that learn how different objects can be picked up, or robots that automatically adapt their behavior after being damaged.”

Breathing robots

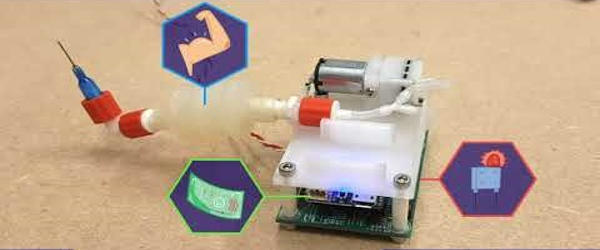

The self-learning system consists of a series of linked building blocks a few centimeters in size, each of which is an individual robot. Each robot contains a microcontroller (minicomputer), a motion sensor, a pump that pumps air into bellows, and a needle through which the air can escape. This combination allows the robot to “breathe.” If you connect a second robot to the first robot’s bellows, the two push each other away as the bellows inflate and pull each other back as the air escapes. That is what propels the entire robotic train. “We wanted the robots to be as simple as possible, so we used bellows and air. Many soft robots use this method,” says Luuk van Laake, a Ph.D. student.

The only thing the researchers do in advance is give each robot a simple set of rules in a few lines of computer code (a short algorithm): switch the pump on and off every few seconds (one on-off period is called a cycle), and try to move as fast as possible in a certain direction. The robot’s chip continuously measures its speed. Every few cycles, the robot makes a small adjustment to the moment the pump switches on and checks whether this change makes the robotic train move faster. Each robotic cart is therefore constantly conducting small experiments.

If you let two or more robots push and pull each other in this way, the train eventually moves in a single direction. In the process, each robot learns the best setting for its pump without communicating with the others and without precise programming on how to proceed. The system gradually improves itself. The videos accompanying the article show the train moving slowly but steadily around a circular track.
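
The learning rule described above, running on several carts at once, can be sketched in a few lines of Python. This is a hypothetical illustration, not the researchers’ code: the article gives neither their algorithm nor a physical model of the train, so `train_speed` is a made-up formula in which the train is fastest when each cart switches its pump on a quarter cycle after the cart in front of it, and the names `Robot`, `experiment` and `run_train` are our own. Each cart stores only its own pump timing, nudges it slightly, and keeps the nudge only if the train’s measured speed goes up; the only thing linking the carts is the shared train speed each one senses.

```python
import math
import random
from collections.abc import Callable


def train_speed(phases: list[float]) -> float:
    """Hypothetical toy model of the train's speed (not the study's physics).

    phases[i] is the moment within the shared pump cycle (0.0 to 1.0) at which
    robot i switches its pump on. The made-up formula rewards a travelling wave:
    the train reaches its top speed of 1.0 when each robot fires a quarter cycle
    after the one in front of it. Needs at least two robots.
    """
    gaps = [b - a for a, b in zip(phases, phases[1:])]
    return sum(math.sin(2 * math.pi * g) for g in gaps) / len(gaps)


class Robot:
    """One cart: it knows only its own pump timing and the speed it measures."""

    def __init__(self, rng: random.Random, step: float = 0.05):
        self.rng = rng            # private randomness; nothing is shared with other carts
        self.step = step          # size of the "minor adjustment" to the pump timing
        self.phase = rng.random() # arbitrary starting moment to switch the pump on

    def experiment(self, measure_speed: Callable[[], float]) -> None:
        """Nudge the pump timing, run and measure again, keep the nudge only if faster."""
        before = measure_speed()
        old_phase = self.phase
        self.phase = (old_phase + self.rng.uniform(-self.step, self.step)) % 1.0
        if measure_speed() <= before:
            self.phase = old_phase  # not faster: undo the experiment


def run_train(n_robots: int = 4, experiments: int = 2000, seed: int = 1):
    """Return (speed before learning, speed after learning) for the toy train."""
    robots = [Robot(random.Random(seed * 1000 + i)) for i in range(n_robots)]
    scheduler = random.Random(seed)

    def measure_speed() -> float:
        # The simulator plays the role of physics: every cart feels the same
        # train speed through its own motion sensor; carts never read each other.
        return train_speed([r.phase for r in robots])

    start = measure_speed()
    for _ in range(experiments):
        # Carts experiment one at a time, in random order (a simplification).
        scheduler.choice(robots).experiment(measure_speed)
    return start, measure_speed()


start, final = run_train()
print(f"train speed before learning: {start:.2f}, after: {final:.2f}")
```

Letting carts experiment one at a time is a simplification so that each measurement reflects a single change. In the study every cart experiments continuously, so a real cart presumably also has to cope with its neighbours changing their timing at the same moment.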

Tackling new situations

The researchers tested two versions of the algorithm to see which performed better. The first algorithm stores the robot’s best speed measurements and uses them to determine the best pump setting. The second algorithm considers only the most recent speed measurement when deciding when to switch on the pump in each cycle. The second algorithm performed far better. It can cope with situations that were never pre-programmed, because it does not waste time on behavior that may have worked well in the past but no longer works in the new situation.

It could, for example, quickly get past an obstacle on the track, whereas robots running the other algorithm came to a halt. “If you find the right algorithm, this simple system becomes very robust,” Overvelde says. “It can handle a variety of unexpected situations.”
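
Why remembering the best result can backfire is easiest to see in a toy run. This is a hypothetical sketch for a single cart: the article does not spell out either update rule, so `run("best")` is our reading of the first algorithm (a nudge is kept only if it beats the best speed ever recorded) and `run("recent")` our reading of the second (a nudge is kept if it beats a fresh measurement of the current timing). The speed model `toy_speed` and the made-up conditions `BEFORE` and `AFTER` stand in for a clear track and for the same track after an obstacle or damage lowers the top speed and shifts the best pump timing.

```python
import math
import random

BEFORE = (0.3, 1.0)  # (best pump timing, top speed) on a clear track: made-up values
AFTER = (0.7, 0.5)   # made-up conditions after an obstacle or damage


def toy_speed(phase: float, peak: float, top: float) -> float:
    """Hypothetical train speed for pump timing `phase` (0.0 to 1.0 of a cycle)."""
    return top * math.cos(2 * math.pi * (phase - peak))


def run(rule: str, trials_each: int = 400, step: float = 0.05, seed: int = 0):
    """Learn the pump timing under BEFORE, then keep learning under AFTER.

    rule="best":   keep a nudge only if it beats the best speed ever recorded.
    rule="recent": keep a nudge if it beats a fresh measurement of the current
                   timing, so only the most recent speed counts.
    Returns (speed right after the change, final speed).
    """
    rng = random.Random(seed)
    phase = 0.2  # hypothetical, already reasonable starting timing
    best = toy_speed(phase, *BEFORE)
    at_change = None
    for trial in range(2 * trials_each):
        conditions = BEFORE if trial < trials_each else AFTER
        if trial == trials_each:
            at_change = toy_speed(phase, *AFTER)
        candidate = (phase + rng.uniform(-step, step)) % 1.0
        new_speed = toy_speed(candidate, *conditions)
        if rule == "best":
            # A record set under BEFORE may be unbeatable under AFTER.
            if new_speed > best:
                phase, best = candidate, new_speed
        elif rule == "recent":
            # Compare only with the current timing, measured under today's conditions.
            if new_speed > toy_speed(phase, *conditions):
                phase = candidate
        else:
            raise ValueError(f"unknown rule: {rule!r}")
    return at_change, toy_speed(phase, *AFTER)


for rule in ("best", "recent"):
    at_change, final = run(rule)
    print(f"{rule:>6}: speed after change {at_change:+.2f}, after relearning {final:+.2f}")
```

On the clear track both rules make identical choices. After the change, the old record can no longer be beaten, so in this made-up setup the “best” rule never accepts another nudge and stays at a timing that now drives the toy train backwards, while the “recent” rule keeps climbing from wherever the change left it.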

Pulling off a leg

The researchers admit that, however simple the robots may be, they seem to have come to life. In one experiment, the researchers deliberately damaged a robot to see how the system as a whole would recover. “We took out the needle that serves as the nozzle. That struck me as odd, as if we were yanking its leg off.” Even after this maiming, the robots adapted their behavior so that the train could once again move in the right direction, yet another demonstration of how robust the system is.

The system is easy to scale: the researchers have already built a moving train of seven robots. The next step is to build robots capable of more complex behavior. “One example is an octopus-like construction,” says Overvelde. “It will be interesting to see whether the individual building blocks behave like octopus arms. Like our robotic system, those arms have a decentralized nervous system, a sort of independent brain.”