The rise of artificial intelligence (AI) and machine learning (ML) has resulted in a computing crisis and an urgent need for more hardware that is both energy-efficient and scalable. Making decisions based on incomplete data is a critical step in both AI and ML, and the best approach is to output a probability for each possible answer. Current classical computers are incapable of doing so in an energy-efficient manner, which has prompted a search for novel computing approaches. Quantum computers, which use qubits, may be able to help meet these challenges, but they are extremely sensitive to their surroundings, must be kept at extremely low temperatures, and are still in the early stages of development.

Kerem Camsari, an assistant professor of electrical and computer engineering (ECE) at UC Santa Barbara, believes that probabilistic computers (p-computers) are the answer. P-computers are powered by probabilistic bits (p-bits), which interact with other p-bits in the same system. Unlike bits in traditional computers, which are either 0 or 1, or qubits, which can be in more than one state at the same time, p-bits fluctuate between 0 and 1 and operate at room temperature. Camsari and his colleagues discuss their project, which demonstrated the promise of p-computers, in an article published in Nature Electronics.
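To make the idea of a fluctuating bit concrete, here is a minimal software sketch of a single p-bit. It is only an illustration under stated assumptions, not the team's hardware: the function names `p_bit_sample` and `average_output` are hypothetical, and the sketch assumes the commonly used model in which the probability of reading 1 is a smooth function of an input signal, P(1) = (1 + tanh(input)) / 2.

```python
import math
import random


def p_bit_sample(input_signal: float, rng: random.Random) -> int:
    """Return one reading (0 or 1) from a hypothetical software p-bit.

    Assumption: the chance of reading 1 is (1 + tanh(input_signal)) / 2,
    so a zero input gives a fair coin and a strong input pins the bit.
    """
    prob_one = 0.5 * (1.0 + math.tanh(input_signal))
    return 1 if rng.random() < prob_one else 0


def average_output(input_signal: float, n_samples: int = 10_000, seed: int = 0) -> float:
    """Fraction of readings equal to 1 over many samples of the same p-bit."""
    rng = random.Random(seed)
    total = sum(p_bit_sample(input_signal, rng) for _ in range(n_samples))
    return total / n_samples


if __name__ == "__main__":
    for signal in (-2.0, 0.0, 2.0):
        print(f"input {signal:+.1f} -> fraction of 1s: {average_output(signal):.3f}")
```

With no input the bit spends roughly half its time in each state; a positive or negative input biases it toward 1 or 0 without ever freezing it completely.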

“We showed that inherently probabilistic computers, built out of p-bits, can outperform state-of-the-art software that has been in development for decades,” said Camsari, who received a Young Investigator Award from the Office of Naval Research earlier this year.

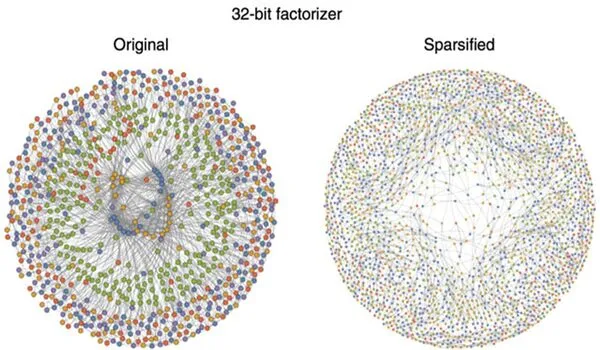

Camsari’s team worked with researchers from the University of Messina in Italy; Luke Theogarajan, vice chair of UCSB’s ECE Department; and physics professor John Martinis, who led the team that built the world’s first quantum computer to achieve quantum supremacy. The researchers achieved their promising results by combining traditional hardware with domain-specific architectures. They created a one-of-a-kind sparse Ising machine (sIm), a novel computing device that solves optimization problems with minimal energy consumption.

According to Camsari, the sIm is a collection of probabilistic bits that can be thought of as people, each with only a small group of trusted friends. Those friendships are the machine’s “sparse” connections.

“People can make decisions quickly because they each have a small group of trusted friends and don’t have to hear from everyone in an entire network,” he explained. “The process by which these agents reach consensus is similar to that used to solve a difficult optimization problem with multiple constraints. Sparse Ising machines enable us to formulate and solve a wide range of optimization problems on the same hardware.”
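The consensus picture can be sketched in a few lines of code. What follows is a rough software illustration, not the team's FPGA design: the names `energy` and `anneal`, the ring-shaped network, and the linear annealing schedule are all assumptions chosen for brevity. Each p-bit (here a value of -1 or +1) listens only to its listed neighbors, and a coupling of -1 means two neighbors "prefer to disagree", which turns the problem into splitting a ring into two alternating groups.

```python
import math
import random


def energy(spins, edges):
    """Ising energy E = -sum of J * s_i * s_j over all edges (lower is better)."""
    return -sum(J * spins[i] * spins[j] for i, j, J in edges)


def anneal(n, edges, sweeps=200, beta_max=3.0, seed=0):
    """Update p-bits one at a time while slowly reducing randomness.

    Assumption: each p-bit takes the value +1 with probability
    (1 + tanh(beta * input)) / 2, where the input is the weighted sum
    of its neighbors only (the sparse "trusted friends").
    """
    rng = random.Random(seed)
    neighbors = [[] for _ in range(n)]
    for i, j, J in edges:
        neighbors[i].append((j, J))
        neighbors[j].append((i, J))

    spins = [rng.choice((-1, 1)) for _ in range(n)]
    best_spins, best_energy = spins[:], energy(spins, edges)

    for sweep in range(sweeps):
        beta = beta_max * (sweep + 1) / sweeps  # larger beta means less noise
        for i in range(n):
            local_input = sum(J * spins[j] for j, J in neighbors[i])
            threshold = math.tanh(beta * local_input)
            spins[i] = 1 if rng.uniform(-1.0, 1.0) < threshold else -1
        current = energy(spins, edges)
        if current < best_energy:
            best_spins, best_energy = spins[:], current

    return best_spins, best_energy


if __name__ == "__main__":
    n = 8
    ring = [(i, (i + 1) % n, -1) for i in range(n)]  # neighbors prefer to disagree
    spins, e = anneal(n, ring)
    print("spins:", spins, "energy:", e)
```

For this ring the lowest possible energy is -8, reached when neighbors alternate. Changing the edge list changes the problem being solved while the update rule stays the same, which is the sense in which one machine can address many optimization problems.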

The team prototyped its architecture on a field-programmable gate array (FPGA), a powerful piece of hardware that offers far more flexibility than application-specific integrated circuits. “Imagine a computer chip that allows you to program the connections between p-bits in a network without creating a new chip,” Camsari explained.

The researchers demonstrated that their sparse architecture in FPGAs was up to six orders of magnitude faster and had sampling speeds that were five to eighteen times faster than those achieved by optimized algorithms used on traditional computers.

Furthermore, they claimed that their sIm achieves massive parallelism, with the number of flips per second scaling linearly with the number of p-bits. Camsari returned to the example of trusted friends attempting to make a decision.

“The key issue is that reaching a consensus requires strong communication among people who are constantly talking with one another based on their latest thinking,” he explained. “A consensus cannot be reached and the optimization problem is not solved if everyone makes decisions without listening.”

In other words, the faster the p-bits communicate, the faster they can reach a consensus, which is why increasing the flips per second while ensuring that everyone listens to each other is critical. “This is exactly what we aimed for with our design,” he said. “We parallelized the decision-making process by ensuring that everyone listens to each other and limiting the number of ‘people’ who could be friends with each other.”
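One standard way to update many p-bits at once while still letting every p-bit hear its neighbors' latest values is graph coloring: p-bits that share no connection receive the same color and can be updated in the same step. The article does not say which method the team used, so the sketch below is an assumption for illustration only, and the names `greedy_coloring` and `color_groups` are hypothetical.

```python
def greedy_coloring(n, edges):
    """Give each node the smallest color not already used by a neighbor."""
    neighbors = [set() for _ in range(n)]
    for i, j in edges:
        neighbors[i].add(j)
        neighbors[j].add(i)

    colors = [-1] * n  # -1 marks a node that has no color yet
    for node in range(n):
        used = {colors[m] for m in neighbors[node] if colors[m] != -1}
        color = 0
        while color in used:
            color += 1
        colors[node] = color
    return colors


def color_groups(colors):
    """Group node indices by color; each group can be updated in one step."""
    groups = {}
    for node, color in enumerate(colors):
        groups.setdefault(color, []).append(node)
    return [groups[c] for c in sorted(groups)]


if __name__ == "__main__":
    for n in (8, 16):
        ring = [(i, (i + 1) % n) for i in range(n)]  # each p-bit has two "friends"
        groups = color_groups(greedy_coloring(n, ring))
        print(f"{n} p-bits -> {len(groups)} colors, group sizes {[len(g) for g in groups]}")
```

In this toy ring, two colors suffice no matter how many p-bits there are, so doubling the p-bits doubles the number updated per step. A fully connected network, by contrast, needs one color per p-bit and gains nothing from running in parallel, which is why limiting the number of "friends" matters.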

Their work also demonstrated the ability to scale p-computers up to 5,000 p-bits, which Camsari regards as extremely promising, despite the fact that their ideas are only one piece of the p-computer puzzle. “These results were the tip of the iceberg for us,” he explained. “We used existing transistor technology to emulate our probabilistic architectures, but the benefits of using nanodevices with much higher levels of integration to build p-computers would be enormous. This is what is keeping me awake at night.”

The device’s potential was first shown by an 8-p-bit p-computer that Camsari and his collaborators built during his time as a graduate student and postdoctoral researcher at Purdue University. Their article, published in 2019 in Nature, described a ten-fold reduction in energy and a hundred-fold reduction in area footprint compared to a classical computer. Seed funding, provided in fall 2020 by UCSB’s Institute for Energy Efficiency, allowed Camsari and Theogarajan to take p-computer research one step further, supporting the work featured in Nature Electronics.

“The initial findings, combined with our most recent results, imply that building p-computers with millions of p-bits to solve optimization or probabilistic decision-making problems with competitive performance may be just around the corner,” Camsari said. The research team hopes that one day, p-computers will be able to handle a specific set of naturally probabilistic problems much faster and more efficiently.