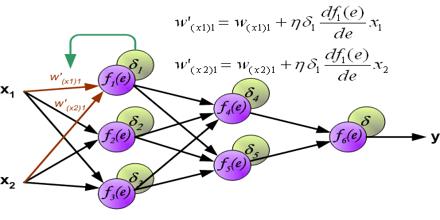

Backpropagation is a “gradient descent” method of training: it uses gradient information to adjust the network weights so that the value of the error function decreases the next time the inputs are presented. Other gradient-based methods from numerical analysis, such as conjugate gradient and quasi-Newton methods, can train networks more efficiently. When the network is simulated on a digital computer, backpropagation relies on a mathematical trick (the chain rule, applied from the output layer backward) that yields, in just two traversals of the network (one forward, one backward), both the difference between the desired and actual output and the derivatives of the resulting error with respect to every connection weight.
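The two traversals can be illustrated with a minimal sketch. It assumes a small network with one hidden layer, sigmoid activations, no bias terms, a single output, and the squared-error cost E = 0.5 · (output − target)²; these choices, the learning rate of 0.5, and all function names (`forward`, `backward`, `train_step`, `numerical_grad`) are hypothetical and chosen only for illustration. The forward traversal computes the output (and therefore the error), the backward traversal applies the chain rule to obtain dE/dw for every weight, and `train_step` uses those derivatives for one gradient-descent update.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, w_hidden, w_out):
    """Forward traversal: inputs -> hidden activations -> single output."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in w_hidden]
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden)))
    return hidden, output


def error(x, target, w_hidden, w_out):
    """Squared-error cost E = 0.5 * (output - target)^2 (an assumed cost function)."""
    _, output = forward(x, w_hidden, w_out)
    return 0.5 * (output - target) ** 2


def backward(x, hidden, output, target, w_out):
    """Backward traversal: chain rule from the output error back to every weight."""
    # dE/d(output pre-activation), using sigmoid'(z) = s * (1 - s)
    delta_out = (output - target) * output * (1.0 - output)
    grad_out = [delta_out * h for h in hidden]
    grad_hidden = []
    for w, h in zip(w_out, hidden):
        # Error signal reaching this hidden unit through its outgoing weight
        delta_h = delta_out * w * h * (1.0 - h)
        grad_hidden.append([delta_h * xi for xi in x])
    return grad_hidden, grad_out


def train_step(x, target, w_hidden, w_out, lr=0.5):
    """One gradient-descent update: w <- w - lr * dE/dw for every weight."""
    hidden, output = forward(x, w_hidden, w_out)
    grad_hidden, grad_out = backward(x, hidden, output, target, w_out)
    new_w_out = [w - lr * g for w, g in zip(w_out, grad_out)]
    new_w_hidden = [
        [w - lr * g for w, g in zip(row, g_row)]
        for row, g_row in zip(w_hidden, grad_hidden)
    ]
    return new_w_hidden, new_w_out


def numerical_grad(f, weights, i, eps=1e-6):
    """Central-difference estimate of df/dweights[i], used only to check backprop."""
    plus, minus = list(weights), list(weights)
    plus[i] += eps
    minus[i] -= eps
    return (f(plus) - f(minus)) / (2 * eps)
```

Comparing the output of `backward` against `numerical_grad` is a common sanity check. It also shows why the trick matters: the finite-difference estimate needs two extra error evaluations per weight, whereas a single backward traversal produces the derivatives for all weights at once.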

Backpropagation